Memory That Moves: Why Autonomous AI Agents Must Own Their Memory

Autonomy stalls when agents can’t remember. A memory-first architecture turns one-off chatbots into autonomous knowledge networks that compound.

If you’ve ever watched a great team ship fast, you’ve seen the secret ingredient: they remember.

Not in the “we have a wiki somewhere” sense. In the muscle memory sense. They recall what worked, what broke, who to ask, what the customer actually meant, and which edge case bit them last time. That recall compresses time. It turns the next decision into a reflex instead of a debate.

AI agents are heading in the same direction. But there’s a catch: most agents today are brilliant goldfish. They can reason, plan, write, and code, then forget it all by the next session. We’ve built brains with no hippocampus. And we act surprised when autonomy stalls.

A startup we know lost a $200K deal this way: its AI agent repeated a misconfigured API call that had already failed three times that week, because it had no memory of the first failure.

Here’s the thesis: the speed of insight grows with the speed of recall. The startups that endure won’t just “use agents.” They’ll build systems where agents can own, curate, and evolve their own memory—forming what I’ll call autonomous knowledge networks.

From One-Off Chatbots to Autonomous Knowledge Networks

A single agent with a long context window is impressive. A fleet of agents that share and refine memory is something else entirely.

Think of a company as a living organism:

- Individual agents are like neurons—specialized, fast, and local.

- Tools and APIs are the sensory and motor systems.

- Memory is synaptic strength: it determines what signals travel easily and what gets ignored.

An autonomous knowledge network is what happens when agents don’t just act—they learn as a system. They store experiences, promote useful patterns, demote noise, and consolidate lessons into stable knowledge.

Without that network, autonomy is mostly theater. The agent can propose actions, but it can’t improve its judgment. It can’t build “instinct.” It can’t compound.

This is why memory is not a feature you bolt on. It’s an architecture choice.

Memory Is a Product Surface, Not a Storage Layer

Most “memory” discussions get stuck in implementation: vector databases, embeddings, chunking strategies, retrieval scores. Important, yes. But memory-first design starts earlier:

What is memory for an agent?

In biology, we don’t store everything. We store what predicts survival. Memory is selective, shaped by reward, repetition, and relevance. Your brain doesn’t keep a full-resolution recording of Tuesday; it keeps the parts that will matter on Thursday.

For AI agents, memory should be similarly intentional. A practical taxonomy:

- Episodic memory: what happened (events, tool calls, outcomes, surprises).

- Semantic memory: what’s true (facts, product knowledge, system constraints).

- Procedural memory: how to do it (workflows, playbooks, successful prompts, code patterns).

- Social memory: who prefers what (stakeholder preferences, customer context, “don’t ping me on weekends”).

A single memory record might look like this:

    {
      "type": "procedural",
      "content": "Validate env var FOO_TOKEN before deployment",
      "source": "incident-2026-03-01",
      "confidence": 0.95,
      "created_at": "2026-03-01T14:32:00Z"
    }

Now the key shift: agents should own their memory.

Owning memory means the agent can:

- Write: capture outcomes and rationales (“we tried X, it failed because Y”).

- Read: retrieve the right memory at the right time, not just “top-k similar chunks.”

- Edit: correct or deprecate stale memories (“this API changed; stop doing that”).

- Consolidate: merge repeated experiences into stable abstractions (“customers churn when onboarding takes > 2 days”).
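To make the four operations concrete, here is a minimal sketch of a store that exposes them. Everything in it is an assumption for illustration: the `Memory` and `MemoryStore` names, the keyword-based `read`, and the choice to average confidence on consolidation are not taken from any particular framework.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class Memory:
    type: str          # "episodic", "semantic", "procedural", or "social"
    content: str
    source: str
    confidence: float
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))
    deprecated_reason: Optional[str] = None  # set when the memory goes stale


class MemoryStore:
    """Hypothetical in-memory store exposing write, read, edit, and consolidate."""

    def __init__(self) -> None:
        self._items: List[Memory] = []

    def write(self, memory: Memory) -> int:
        """Append a memory and return its id (its index in the store)."""
        self._items.append(memory)
        return len(self._items) - 1

    def read(self, mem_type: str, keyword: str) -> List[Memory]:
        """Return live memories of one type whose content mentions the keyword."""
        return [
            m for m in self._items
            if m.type == mem_type
            and m.deprecated_reason is None
            and keyword.lower() in m.content.lower()
        ]

    def deprecate(self, memory_id: int, reason: str) -> None:
        """Edit: mark a memory stale instead of silently deleting it."""
        self._items[memory_id].deprecated_reason = reason

    def consolidate(self, memory_ids: List[int], summary: str) -> int:
        """Merge several memories into one semantic abstraction."""
        sources = ", ".join(self._items[i].source for i in memory_ids)
        avg_conf = sum(self._items[i].confidence for i in memory_ids) / len(memory_ids)
        for i in memory_ids:
            self.deprecate(i, "consolidated")
        return self.write(Memory("semantic", summary, sources, avg_conf))
```

The design choice worth noticing is that editing and consolidating never erase history: old memories are marked with a reason, so you can still see what the agent once believed.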

If memory is just a passive database behind the scenes, you’ll get the worst of both worlds: noisy retention and brittle retrieval.

The agent will also invent “memories” to fill gaps. That’s not neural plasticity—it’s a memory leak.

Memory is a product surface because it shapes:

- user trust (“why did you do that?”),

- compliance (“who can see this?”),

- debugging (“what did you remember when you made that call?”), and

- performance (“how quickly can you reuse prior work?”).

Founders: treat memory like you treat auth. Not optional. Not hand-wavy.

The Compounding Advantage: Recall Turns Into Resilience

Startups rarely lose because they can’t generate ideas. They lose because they can’t repeat the right moves under pressure.

Memory-first agents change the game in three compounding ways.

1) Faster cycles: fewer repeated mistakes

In engineering, we write postmortems not to punish, but to prevent recurrence. Agents need the same mechanism.

A memory-owning agent can store:

- “This deployment failed when env var FOO_TOKEN was missing; validate before release.”

- “User X always wants examples; include a short snippet.”

- “When the DB returns 429, back off with jitter.”

This is procedural memory becoming muscle memory. The next time, the agent doesn’t “rethink” the basics. It just executes.
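As an illustration of what one such procedural memory could turn into once it is executable, here is a small sketch of retrying with exponential backoff and jitter. The `RateLimited` exception and `call_with_backoff` helper are hypothetical names, and the delays are placeholder values rather than recommendations.

```python
import random
import time


class RateLimited(Exception):
    """Hypothetical error raised when the backend answers with status 429."""


def call_with_backoff(operation, max_attempts=5, base_delay=0.5, max_delay=30.0, sleep=time.sleep):
    """Retry `operation` on RateLimited, sleeping a random time under a growing cap."""
    for attempt in range(max_attempts):
        try:
            return operation()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            cap = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, cap))  # jitter: anywhere between 0 and the cap
```

The `sleep` parameter is injectable only so the behavior can be checked without actually waiting.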

2) Better judgment: pattern recognition across episodes

Humans build intuition by seeing many similar situations. Agents can do this too—if they have a mechanism to generalize.

This is where memory consolidation matters. Sleep is the biological metaphor: your brain replays the day, strengthens useful connections, and prunes the rest.

For agents, consolidation might look like:

- nightly summarization of task traces,

- promotion of repeated observations into “rules of thumb,”

- demotion of one-off weirdness,

- explicit tagging of uncertainty (“this was true as of March 2026”).
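A rough sketch of that consolidation pass, under heavy simplifying assumptions: observations are plain strings, "repeated" means an exact match after lowercasing, and the `consolidate` function and its `min_repeats` threshold are invented for this example. A real system would more likely cluster or summarize traces than compare strings.

```python
from collections import Counter
from datetime import date
from typing import Dict, List


def consolidate(observations: List[str], as_of: date, min_repeats: int = 3) -> Dict[str, List[str]]:
    """Promote observations seen at least `min_repeats` times; demote the rest."""
    counts = Counter(obs.strip().lower() for obs in observations)
    promoted, demoted = [], []
    for text, seen in counts.items():
        if seen >= min_repeats:
            # Tag the rule with its evidence and the date it was last known to hold.
            promoted.append(f"{text} (seen {seen}x, true as of {as_of.isoformat()})")
        else:
            demoted.append(text)
    return {"rules_of_thumb": promoted, "demoted": demoted}
```

Note that demoted items are returned rather than dropped, so a later policy can decide whether one-off weirdness is forgotten or kept for another cycle.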

Investors: if a team says “our agent learns,” ask where the learning lives.

3) Organizational continuity: knowledge survives churn

People change roles. They leave. They forget. Slack threads vanish into the abyss.

A memory-first agent can become a continuity layer:

- preserving the rationale behind decisions,

- capturing institutional constraints,

- maintaining a living map of systems and dependencies.

That’s not replacing humans. It’s preventing your company from reliving the same quarter every quarter.

What Memory-First Architecture Looks Like (In Practice)

Let’s get concrete. You don’t need sci-fi. You need discipline.

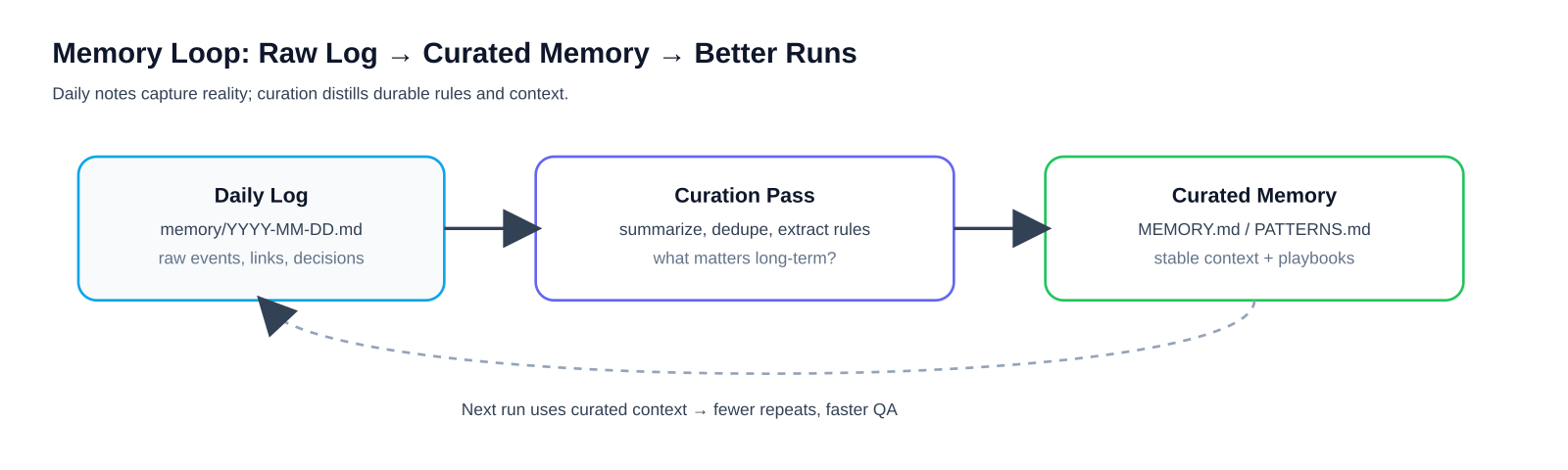

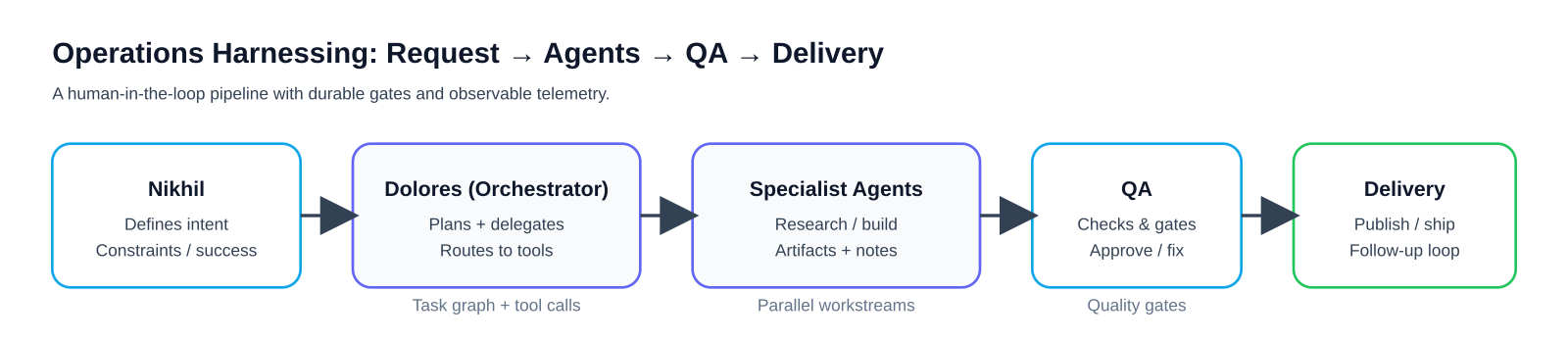

The minimum viable memory loop

A memory-owning agent should have a repeatable loop:

- Observe: log actions, tool results, and outcomes.

- Select: decide what’s worth remembering (with heuristics + feedback).

- Store: write to the right memory type (episodic/semantic/procedural).

- Retrieve: pull memories based on task intent, not just similarity.

- Validate: check memories against current reality (APIs, docs, user state).

- Consolidate: periodically summarize and prune.

If your agent observes, selects, and stores but never retrieves, validates, or consolidates, you’re building a hoarder, not a learner.
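One pass through most of that loop can be sketched in a few functions. This is a toy, not a reference design: events are plain dictionaries, the `intent` and `outcome` keys are assumed fields, selection is a crude "keep failures and surprises" heuristic, and consolidation is left out to keep the sketch short.

```python
from typing import Callable, Dict, List

Event = Dict[str, str]


def select(events: List[Event]) -> List[Event]:
    """Select: keep only failures and surprises (a deliberately crude heuristic)."""
    return [e for e in events if e["outcome"] in {"failure", "surprise"}]


def retrieve(memories: List[Event], intent: str) -> List[Event]:
    """Retrieve: match on the task intent tag rather than on text similarity."""
    return [m for m in memories if m["intent"] == intent]


def validate(memories: List[Event], still_true: Callable[[Event], bool]) -> List[Event]:
    """Validate: drop memories that no longer match current reality."""
    return [m for m in memories if still_true(m)]


def run_loop(events: List[Event], store: List[Event], intent: str,
             still_true: Callable[[Event], bool]) -> List[Event]:
    """One pass: observed events come in, usable memories come out."""
    store.extend(select(events))                          # select + store
    return validate(retrieve(store, intent), still_true)  # retrieve + validate
```

The `still_true` callback is where a check against live APIs, docs, or user state would plug in.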

Real-world analogies that map cleanly

- Git for memory: memories have versions, diffs, and deprecations. “We used to do X; now do Y.”

- Cache hierarchy: hot memories (recent tasks) live close; cold memories live deeper. Retrieval is latency-aware.

- SRE runbooks: procedural memories are executable playbooks with triggers and guardrails.
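The “Git for memory” analogy is the easiest of the three to sketch. Assume each memory has a stable key and an append-only list of versions, each carrying a note about why it replaced the last one. The `VersionedMemory` class and its method names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Version:
    content: str
    note: str  # why this version replaced the previous one


class VersionedMemory:
    """Hypothetical append-only history per memory key, loosely modeled on commits."""

    def __init__(self) -> None:
        self._history: Dict[str, List[Version]] = {}

    def commit(self, key: str, content: str, note: str) -> None:
        """Record a new version without discarding older ones."""
        self._history.setdefault(key, []).append(Version(content, note))

    def current(self, key: str) -> Optional[str]:
        """Return the latest content for a key, or None if nothing was committed."""
        versions = self._history.get(key)
        return versions[-1].content if versions else None

    def log(self, key: str) -> List[str]:
        """Return the change notes for a key, oldest first."""
        return [v.note for v in self._history.get(key, [])]
```

With this shape, “we used to do X; now do Y” is two commits under one key, and the reason for the change travels with the memory.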

Guardrails: autonomy needs boundaries

Memory without governance is how you end up with a very confident agent repeating a very wrong assumption.

Practical safeguards:

- Scopes and permissions: what an agent can remember, and where it can write.

- Attribution: every memory stores source, timestamp, and confidence.

- Auditability: you can answer, “What did the agent know when it acted?”

- Forgetting: retention policies and deliberate deletion (the healthy kind of memory loss).

Yes, forgetting is a feature. Even your brain prunes synapses. Startups should too.
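Three of these safeguards fit in a few lines each. The sketch below assumes memories are dictionaries with `type` and `created_at` fields; the agent name, scope table, and retention windows are made-up placeholders, not recommended values.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Set

# Hypothetical policy tables; real values would come from your governance rules.
WRITE_SCOPES: Dict[str, Set[str]] = {"support-agent": {"episodic", "social"}}
RETENTION: Dict[str, timedelta] = {
    "episodic": timedelta(days=90),
    "social": timedelta(days=365),
}


def can_write(agent: str, memory_type: str) -> bool:
    """Scopes: an agent may only write memory types it has been granted."""
    return memory_type in WRITE_SCOPES.get(agent, set())


def expire(memories: List[dict], now: datetime) -> List[dict]:
    """Forgetting: keep memories inside their retention window (no policy = keep)."""
    return [
        m for m in memories
        if now - m["created_at"] <= RETENTION.get(m["type"], timedelta.max)
    ]


def known_at(memories: List[dict], moment: datetime) -> List[dict]:
    """Auditability: what had been written by the time the agent acted?"""
    return [m for m in memories if m["created_at"] <= moment]
```

`known_at` only answers the audit question honestly if expired memories are archived somewhere rather than destroyed, which is a policy decision this sketch does not make for you.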

The Investor Lens: What to Look For in “Agentic” Startups

If you’re evaluating an agent-centric platform, don’t get dazzled by demos. Ask memory questions:

- Is memory first-class in the product? (UI, APIs, policies)

- Can agents edit and consolidate memories, or only append?

- Is retrieval intent-aware? (task goals, tool context, user identity)

- How does the system prevent memory drift? (validation, time-bounding, confidence)

- What happens at scale? (multiple agents, conflicting memories, role-based views)

The most resilient startups will treat memory like a compounding asset. Everyone else will keep paying the compounding cost of repeated failure—one avoidable mistake at a time.

Closing: Build for Plasticity, Not Just Performance

We’re entering an era where competitive advantage won’t come from having an agent.

It will come from having agents that remember well—selectively, safely, and usefully.

Neural plasticity isn’t about storing more. It’s about adapting faster. The same is true for autonomous systems.

If your agents can own and evolve their memory, they can build instincts, recover from shocks, and keep improving even as the environment shifts.

And if they can’t? You’ll have a very talkative intern who asks the same question every morning.

(No offense to interns. At least they don’t crash because of a memory leak.)

Key Takeaway

If you want autonomous agents that actually improve over time, treat memory as something the agent can own—not just a database your system queries.

Teams that build intentional memory loops (write, retrieve, validate, consolidate) get compounding reliability and speed. Teams that don’t end up re-learning the same lessons under new pressure.
